Everyone has different ideas on what the perfect search-and-rescue robot is, and for a University of Pennsylvania Mod Lab team, it comes in the form of a snake robot and quadcopter drone chimera. The Hybrid Exploration Robot for Air and Land Deployment, or H.E.R.A.L.D., is composed of two snake-like machines that attach to a UAV via magnets. After being carried to the site by the quadcopter, the snakebots can detach themselves, slip through the holes and cracks of, for instance, a collapsed building, and slither to their destination. The researchers have been working on H.E.R.A.L.D. since 2013, but now that all its components can properly merge and work together like the robots in Power Rangers, they presented it at the 2014 IEEE/RSJ International Conference on Intelligent Robots and Systems.

You can watch the machine ace the tests its creators put it through in the video after the break, including a part where a researcher uses an Xbox controller to navigate a snakebot through a pipe.


Saturday, 27 September 2014

Wednesday, 24 September 2014

Robot butlers with RFID scanners could be used as service animals

We already use dogs and monkeys as service animals to help people with disabilities, so why not use robots as well? Researchers at Georgia Tech have combined RFID tags, long-distance scanners, and a self-propelled robot to develop a method of reliably locating objects in a real-world setting. With this setup, people with reduced mobility or short-term memory can ask the robot for help finding important items like medicine or documents.

In their research paper, Travis Deyle, Matthew Reynolds, and Charles Kemp explain how they used ultra-high frequency RFID tags to help their custom PR2 robot locate specific items in a complex environment. Items of interest are fitted with unique RFID tags, and the robot has a long-distance RFID scanner mounted on each shoulder. From there, the robot moves about the living space, logging all of the tags it senses. After a lap or two around the house, it can then search out a specific tag and move toward the item in question.
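The map-then-search idea described above can be illustrated with a toy sketch. This is not the researchers' method: the `TagMap` class, the `(x, y)` positions, and the strongest-signal rule are all hypothetical simplifications, assuming the robot records where it was and how strong each tag's signal was every time it senses one.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

Position = Tuple[float, float]  # hypothetical (x, y) map coordinates in metres


@dataclass
class TagReading:
    tag_id: str
    position: Position  # where the robot was when it sensed the tag
    rssi: float         # received signal strength in dBm (higher is roughly closer)


class TagMap:
    """Hypothetical log of every tag the robot senses while touring a home."""

    def __init__(self) -> None:
        self.readings: Dict[str, List[TagReading]] = {}

    def log(self, reading: TagReading) -> None:
        # Record each sighting under its tag ID during the exploration lap(s).
        self.readings.setdefault(reading.tag_id, []).append(reading)

    def best_guess(self, tag_id: str) -> Optional[Position]:
        # Simplifying assumption: the item lies nearest the spot where its
        # tag's signal was strongest. Returns None if the tag was never seen.
        sightings = self.readings.get(tag_id)
        if not sightings:
            return None
        strongest = max(sightings, key=lambda r: r.rssi)
        return strongest.position


tag_map = TagMap()
tag_map.log(TagReading("medicine", (1.0, 2.0), -70.0))
tag_map.log(TagReading("medicine", (3.0, 2.5), -55.0))
tag_map.log(TagReading("passport", (0.5, 4.0), -62.0))
print(tag_map.best_guess("medicine"))  # (3.0, 2.5)
print(tag_map.best_guess("keys"))      # None
```

A real system has to cope with noisy, reflection-prone RFID signals, so picking the single strongest reading is only a first approximation of where the robot should head.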

Monday, 30 June 2014

DARPA's Most Challenging Robot Contest Set for June 2015

Will robots ever be able to save the day in the aftermath of a tsunami or nuclear meltdown? The U.S. military has been trying to find out.

Through its Robotics Challenge, the Pentagon's Defense Advanced Research Projects Agency, or DARPA, has pushed teams of engineers to build machines that can carry out a series of tricky tasks and navigate a grueling obstacle course in a mock disaster zone.

Next year, the ongoing contest will draw to a close with a final round of competition in Southern California, DARPA officials announced today.

Sunday, 13 April 2014

Britain's Ministry of Defence using robot soldier for testing protective suits

Porton Man is designed to test protective clothing for the MOD


The British Ministry of Defence (MOD) has a new soldier that costs £1.1 million (US$1.8 million) and goes by the odd name of “Porton Man.” Based at the Defence Science and Technology Laboratory (Dstl) in Porton Down, Wiltshire, Porton Man isn't your average squaddie. He’s a robotic mannequin designed to test suits and equipment for the British armed forces in order to help protect them against chemical and biological weapons.

The threat of nuclear, biological, and chemical (NBC) weapons comes with the territory for modern soldiers. Aside from the horrific effects of the weapons themselves, the mere threat of their use requires military personnel to carry out their duties wearing all-encompassing protective clothing that is hot, suffocating, and restrictive. To improve both the effectiveness and durability of NBC suits for British forces, Dstl is responsible for testing them using actual warfare agents.

The problem is that testing a suit’s effectiveness against nerve gas or anthrax is tricky because, like testing a bulletproof vest, it’s not exactly the sort of thing you want to do while someone is wearing it. For decades, the answer at Dstl and elsewhere has been to use mannequins kitted out with sensors as stand-ins for soldiers, but mannequins tend to be static affairs that don’t tell you much about how the suit will work while the wearer is moving about.

This is where Porton Man comes in. Its name is derived from Porton Down, the location of Dstl, where Britain once developed biological and chemical weapons and which is now dedicated to developing ways of detecting and countering them. The robot was designed by i-bodi Technology Ltd and is the only animatronic robot of its type in the UK.

Porton Man is designed to be more than a clothes horse. Suspended in its motorized frame, it’s built to act as a realistic replacement for a soldier for testing purposes. Made with advanced lightweight materials derived from Formula 1 racing cars and the latest animatronics, and kitted out with over 100 sensors, it can determine how well an NBC suit provides protection while walking, marching, running, sitting, kneeling, and even sighting a weapon, while providing scientists with real-time data analysis.

“Our brief was to produce a lightweight robotic mannequin that had a wide range of movement and was easy to handle," says Jez Gibson-Harris, Chief Executive Officer of i-bodi Technology. "Of course there were a number of challenges associated with this and one way we looked to tackle these challenges was through the use of Formula 1 technology. Using the same concepts as those used in racing cars, we were able to produce very light but highly durable carbon composite body parts for the mannequin.”

Saturday, 29 March 2014

Emily The Robot Can Save Lives In The Open Water

Emily The Robot can save lives in the open water. Emily swims fast - actually she flies through the water - in order to save distressed swimmers.

CNN broadcast a video on Emily and its naval technology. This summer's model is remote-controlled by a human lifeguard onshore, but by next year, the 2011 models will be fully automatic.

At US$3,500, Emily is not an inexpensive alternative to human lifeguards, but she sure travels faster - about six times faster due to her engine and Jet Ski impeller, capable of speeds up to 28 mph.

This may be a wise investment for some open water swim organizations that are focused on safety in large bodies of water - especially as the athletes are stretched out in a conga line along a seashore or lake. Emily has already been distributed to several municipalities.

A typical triathlon or mass-participation open water swim may have too many people in close proximity for Emily to be useful, and we are not sure how Emily will distinguish between a truly distressed swimmer and a mass of inexperienced swimmers towards the back of a pack. But it only takes one rescue to make it all worthwhile.

Friday, 21 March 2014

Blue River Technology Takes In Another $10M For Its Agriculture Optimizing Robots

Blue River Technology, which uses robotics and computer vision to optimize agriculture by, for instance, determining which lettuces to thin out of a row and which to keep, has closed a $10 million Series A-1 funding round led by Data Collective Venture Capital, topping up a $3.1 million Series A it raised back in 2012.

Eric Schmidt’s Innovation Endeavors also joined the round as a new investor, while existing investor Khosla Ventures participated.

Blue River Technology’s main premise is to reduce the use of chemicals in food production by optimizing agricultural methods via robotics systems that can automatically recognize plants and make decisions about which seedlings to thin or identify weeds to eliminate.

The idea is to enable large-scale farms to tend to the needs of individual plants, rather than relying on the broadcast methods employed by current-generation agriculture, which sprays pesticide en masse because, well, chemicals are dirt cheap. Yet problems with environmental or even food contamination are not factored into current farming methods, and that’s something Blue River is hoping to change.

CEO and co-founder, Jorge Heraud, told TechCrunch: “Traditional agriculture is very chemical intensive. Chemicals are broadcast applied — a field of many acres, many hectares, gets the same treatment. All plants get fertilized in the same way, all plants get treated with chemical herbicide whether they have weeds or not. Or they get pesticides whether they are sick or not. Everything is just treated the same way.

“The future I see is a future where we use half, a fifth, a tenth of those chemicals by just understanding and putting herbicides only on the plants that need them, by putting fertilization only in the quantities that you need them, putting disease control chemical only on the plants that need it. This can mean just a phenomenal amount of savings and efficiency increase and yield increase… We’re calling it ‘making every plant count’.”

Blue River Technology’s first product — currently being used by some eight customers in different parts of the U.S. (and bringing in revenue for the startup) — is designed to precision thin lettuce crops by allowing farmers to set the required spacing between plants. The machine then targets herbicide spray to remove only the plants that aren’t wanted.
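To make the spacing idea concrete, here is a minimal sketch, assuming the vision system has already reported each plant's position along the row in centimetres. The `plan_thinning` function and its greedy keep-or-spray rule are hypothetical; the article does not say how Blue River's machine actually decides which plants to remove.

```python
from typing import List, Tuple


def plan_thinning(positions_cm: List[float], spacing_cm: float) -> Tuple[List[float], List[float]]:
    """Split detected plants into (keep, spray) for a desired in-row spacing.

    Hypothetical greedy rule: walk along the row and keep a plant only if it
    is at least spacing_cm past the last plant kept; everything else is
    marked for a targeted herbicide spray.
    """
    keep: List[float] = []
    spray: List[float] = []
    for pos in sorted(positions_cm):
        if not keep or pos - keep[-1] >= spacing_cm:
            keep.append(pos)
        else:
            spray.append(pos)
    return keep, spray


keep, spray = plan_thinning([0, 4, 9, 21, 25, 33, 41], spacing_cm=20)
print(keep)   # [0, 21, 41]
print(spray)  # [4, 9, 25, 33]
```

A greedy pass like this is simple and predictable, but it always keeps the first plant it sees; a real machine might instead prefer the healthiest seedling within each spacing window.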

This work would typically be done by human thinners, so in this instance Blue River Technology isn’t necessarily reducing the chemicals used. But it’s part of its strategy for overcoming the agricultural industry’s over-reliance on herbicides and pesticides generally.

Heraud said the biggest barrier to uptake of robotics-optimized agriculture is the low cost (to agriculture) of using chemicals. That’s why it has started by focusing on specific areas where costs are higher, such as the aforementioned lettuce thinning (since that’s usually done by human workers, who are more expensive to employ than mass spraying).

By targeting its tech at areas that aren’t currently optimised by automation, Blue River hopes to expand into areas that are — and help reduce the chemical load being sprayed onto fields in the process.

“If you’re competing against chemicals right now, or big pieces of automation, it’s pretty hard; the costs are pretty low,” said Heraud. Ergo, the initial strategy to build traction in the agricultural industry is “getting good at the bits where chemicals are not being effective yet”.

Other areas Blue River hopes to target next are portions of agriculture where herbicides have been over-used and led to problems with herbicide-resistant weeds — which in turn requires farmers to use more expensive chemicals to kill them. That makes a precision targeting technological solution potentially more attractive, argues Heraud.

Blue River plans to use its new financing to further expand its engineering team and product offering. Specifically, he said it’s working on improving its lettuce precision thinning machine with a next-gen version of the product in the works that’s wider, faster and more accurate.

It’s also working on extending its plant recognition systems — which is where the computer vision tech comes in — and also on big data techniques for application with row crops such as corn and soybeans, which it said can benefit from phenotyping for crop breeding as well as precise weed control.

Phenotyping refers to the process seed breeders engage in when propagating plants to produce high-quality seed. This is an area where Blue River sees plenty of potential for its technology.

“What this machine would do is look at plants and take very quantitative measurements of how the plant does in different situations — how does it respond to having low nitrogen, how does it respond to having low water, how does it respond to higher temperatures, higher salinity – so the idea is to understand the plant’s physiology better, so you can select better, so it’s a research tool for seed developers,” said Heraud, explaining how Blue River’s computer vision tech could be applied to the phenotyping field.

“It’s a very important tool too — if you think about agriculture and how you can have an impact on agriculture… the work that seed breeders do can have one of the largest impacts on food and food worldwide,” he added.

Commenting on Blue River’s funding in a statement, Vinod Khosla said: “Blue River is taking a very innovative and practical approach to solving one of the world’s top problems – how to produce more food in a responsible way. I’m impressed by how quickly the company brought its first product to market, and how successful it’s been. This high-caliber Blue River team has assembled leading experts spanning both cutting-edge technology and agriculture, and they are in a great position to lead the next gen of environmentally-friendly precision agriculture.”

“Blue River is leveraging three important trends: machine learning, data-driven agriculture and robotics,” added Matt Ocko, founding partner of Data Collective Venture Capital, in a second supporting statement. “This approach has the potential to revolutionize how we produce food in the near future.”

Friday, 14 March 2014

Myth of the ‘real-life Robocop’

Reports that the ultimate crime enforcer may be on our streets soon is largely news hype, says Quentin Cooper. We’re more likely to see Robosnoop, not Robocop.

In the new reboot he’s called the “future of American justice”. In the far superior 1987 original he’s the “future of law enforcement”*. But is Robocop the future of anything?

Both versions of the movie explore how the war against crime might be turned by a man-machine cyborg, programmed to “serve the public trust, protect the innocent, uphold the law”. Even in 1987 this idea of robotically enhanced policing wasn’t new, at least in fiction – I’m particularly fond of the late 1970s US sitcom Holmes & Yoyo, in which a cop with a habit of leaving his partners in hospital pairs up with an android specially programmed for police work. Since then other TV shows have embraced this premise, including Future Cop, Mann & Machine and, most recently, the ongoing Fox series Almost Human, where in the year 2048 every cop is paired with an android.

Given our fondness both for police dramas and for stories where humans work alongside humanoid machines (Data in Star Trek, David in AI, David in Prometheus, plus many others not brought to you by the letter D) it’s easy to see why television and movie executives keep going back to the same premise. And they’re not the only ones.

Will we ever see Robocops roaming our streets? (MGM/Columbia)

Go a-Googling and you’ll find many, many references to “real-life Robocops” and articles about how police forces and defence agencies are already following in his clanking metal footsteps. This is largely journalistic hyperbole. To the best of my knowledge there is no current research on melding man and circuitry to create cyborg cops. And no-one even has plans to put armed robots on the beat, primed to laser anyone caught littering. What is advancing at a breathtaking pace, though, is the increasing use of automation and autonomy in policing and surveillance. Less Robocop, more Robosnoop.

Several robotics companies already offer a range of “law enforcement machines” – non-humanoid devices often deployed for surveillance in dangerous situations such as getting up close with suspected bombs. That’s the robot as merely a tool, but there are plans to give machines a greater role in policing.

In December, California startup Knightscope unveiled the prototype of their K5 Autonomous Data Machine. An R2-D2 lookalike, it’s designed to combine sensory readings – not just sound and vision but touch and smell – with known social and financial data on its surroundings in order to “predict and prevent crime in your community”. Which puts it almost in the “pre-crime” territory of Spielberg’s Minority Report. If nothing else it’s five feet tall, so that should deter some potential wrong-doers.

Getting even closer to Robocop is the work going on at Florida International University, assessing the viability of hooking up disabled police officers (and soldiers) to “telebots”, so they can control them as they go on patrol.

Again, there’s a long way to go before this kind of technology is close to being deployed. But other advances are already on the street. Or – at least – looking down on the street from above. Although unmanned aircraft have been around for almost a century, it’s only since the original Robocop came out that we’ve become very familiar with the use of drones around the world. Some are purely for remote monitoring using cameras and sensors, others are heavily armed hunter-killers. The unsubtly named Reaper (more formally the MQ-9 Reaper from General Atomics) is already a veteran of numerous combat missions in Afghanistan, Iraq and beyond.

Drones being deployed in warzones and other hotspots are still a long way from the policing-by-machine depicted in Robocop. But wait. Following considerable pressure from the multi-billion-dollar Unmanned Aerial Systems industry, the Federal Aviation Administration (FAA) now have a Congressionally approved mandate to integrate civilian drones into American airspace, with the FAA themselves estimating there could be “30,000 drones operating by 2020”.

While proponents have flagged up many positive uses – from being a cheaper, quieter alternative to police helicopters right down to them helping get packages and pizza delivered – there are numerous concerns about drone proliferation. Not just the obvious ones about privacy and civil rights, but also safety and security – Reapers and other drones already have a reputation for being accident prone, and there’s also the risk of them more deliberately going out of control through hacking.

If plans go ahead, US authorities estimate there could be 30,000 drones like the MQ-9 Reaper patrolling the skies by 2020 (Getty Images)

If there’s one thing science-fiction warns us about, it’s the potential for anything more sophisticated than a calculator to malfunction with homicidal consequences. So be very wary of computers and robots that are meant to protect us, especially if you’ve given them weaponry. From Skynet in Terminator to the Agents in The Matrix to the Cylons of the reimagined Battlestar Galactica, it’s always the same: smart becomes sentient, sentient becomes belligerent and the machines’ logical conclusion is to wipe out humanity. Or at least enslave us.

That doesn’t mean having ever more drones in our skies or even other more advanced autonomous systems will inevitably lead to the Robocalypse. It means that before it’s too late and our skies are full of flying eyes, we need to make decisions about what we stand to lose as well as gain from all this electronic eternal vigilance.

As the original Robocop says: “Your move, creep”.

*Yes, in the original movie it is the ED-209 robot that is originally described as the “future of law enforcement”. But it was also the film’s tagline, and the trailer ended with “Robocop: the future of law enforcement”.

Better Than Human: Why Robots Will — And Must — Take Our Jobs

It’s hard to believe you’d have an economy at all if you gave pink slips to more than half the labor force. But that—in slow motion—is what the industrial revolution did to the workforce of the early 19th century. Two hundred years ago, 70 percent of American workers lived on the farm. Today automation has eliminated all but 1 percent of their jobs, replacing them (and their work animals) with machines. But the displaced workers did not sit idle. Instead, automation created hundreds of millions of jobs in entirely new fields. Those who once farmed were now manning the legions of factories that churned out farm equipment, cars, and other industrial products. Since then, wave upon wave of new occupations have arrived—appliance repairman, offset printer, food chemist, photographer, web designer—each building on previous automation. Today, the vast majority of us are doing jobs that no farmer from the 1800s could have imagined.


It may be hard to believe, but before the end of this century, 70 percent of today’s occupations will likewise be replaced by automation. Yes, dear reader, even you will have your job taken away by machines. In other words, robot replacement is just a matter of time. This upheaval is being led by a second wave of automation, one that is centered on artificial cognition, cheap sensors, machine learning, and distributed smarts. This deep automation will touch all jobs, from manual labor to knowledge work.

First, machines will consolidate their gains in already-automated industries. After robots finish replacing assembly line workers, they will replace the workers in warehouses. Speedy bots able to lift 150 pounds all day long will retrieve boxes, sort them, and load them onto trucks. Fruit and vegetable picking will continue to be robotized until no humans pick outside of specialty farms. Pharmacies will feature a single pill-dispensing robot in the back while the pharmacists focus on patient consulting. Next, the more dexterous chores of cleaning in offices and schools will be taken over by late-night robots, starting with easy-to-do floors and windows and eventually getting to toilets. The highway legs of long-haul trucking routes will be driven by robots embedded in truck cabs.

All the while, robots will continue their migration into white-collar work. We already have artificial intelligence in many of our machines; we just don’t call it that. Witness one piece of software by Narrative Science (profiled in issue 20.05) that can write newspaper stories about sports games directly from the games’ stats or generate a synopsis of a company’s stock performance each day from bits of text around the web. Any job dealing with reams of paperwork will be taken over by bots, including much of medicine. Even those areas of medicine not defined by paperwork, such as surgery, are becoming increasingly robotic. The rote tasks of any information-intensive job can be automated. It doesn’t matter if you are a doctor, lawyer, architect, reporter, or even programmer: The robot takeover will be epic.

And it has already begun.

Here’s why we’re at the inflection point: Machines are acquiring smarts.

We have preconceptions about how an intelligent robot should look and act, and these can blind us to what is already happening around us. To demand that artificial intelligence be humanlike is the same flawed logic as demanding that artificial flying be birdlike, with flapping wings. Robots will think different. To see how far artificial intelligence has penetrated our lives, we need to shed the idea that they will be humanlike.

Consider Baxter, a revolutionary new workbot from Rethink Robotics. Designed by Rodney Brooks, the former MIT professor who invented the best-selling Roomba vacuum cleaner and its descendants, Baxter is an early example of a new class of industrial robots created to work alongside humans. Baxter does not look impressive. It’s got big strong arms and a flatscreen display like many industrial bots. And Baxter’s hands perform repetitive manual tasks, just as factory robots do. But it’s different in three significant ways.

First, it can look around and indicate where it is looking by shifting the cartoon eyes on its head. It can perceive humans working near it and avoid injuring them. And workers can see whether it sees them. Previous industrial robots couldn’t do this, which means that working robots have to be physically segregated from humans. The typical factory robot is imprisoned within a chain-link fence or caged in a glass case. They are simply too dangerous to be around, because they are oblivious to others. This isolation prevents such robots from working in a small shop, where isolation is not practical. Optimally, workers should be able to get materials to and from the robot or to tweak its controls by hand throughout the workday; isolation makes that difficult. Baxter, however, is aware. Using force-feedback technology to feel if it is colliding with a person or another bot, it is courteous. You can plug it into a wall socket in your garage and easily work right next to it.

Second, anyone can train Baxter. It is not as fast, strong, or precise as other industrial robots, but it is smarter. To train the bot you simply grab its arms and guide them in the correct motions and sequence. It’s a kind of “watch me do this” routine. Baxter learns the procedure and then repeats it. Any worker is capable of this show-and-tell; you don’t even have to be literate. Previous workbots required highly educated engineers and crack programmers to write thousands of lines of code (and then debug them) in order to instruct the robot in the simplest change of task. The code has to be loaded in batch mode, i.e., in large, infrequent batches, because the robot cannot be reprogrammed while it is being used. Turns out the real cost of the typical industrial robot is not its hardware but its operation. Industrial robots cost $100,000-plus to purchase but can require four times that amount over a lifespan to program, train, and maintain. The costs pile up until the average lifetime bill for an industrial robot is half a million dollars or more.
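The cost figures above can be checked with a little arithmetic. In this sketch, `lifetime_cost` is a hypothetical helper that treats programming, training, and maintenance as a multiple of the purchase price, using the article's round numbers ($100,000 purchase, four times that in upkeep) alongside Baxter's $22,000 price from the next paragraph.

```python
def lifetime_cost(purchase_usd: float, upkeep_multiple: float) -> float:
    """Hypothetical model: purchase price plus programming, training, and
    maintenance, with upkeep expressed as a multiple of the purchase price."""
    return purchase_usd * (1 + upkeep_multiple)


# The article's round numbers for a traditional industrial robot:
# $100,000 to buy, roughly four times that to program, train, and maintain.
traditional = lifetime_cost(100_000, 4)
print(traditional)                     # 500000

# Ratio of that lifetime bill to Baxter's $22,000 sticker price.
print(round(traditional / 22_000, 1))  # 22.7
```

Note that $22,000 is Baxter's purchase price, not a lifetime figure, so the ratio compares the two numbers the article sets side by side rather than like-for-like lifetime costs.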

The third difference, then, is that Baxter is cheap. Priced at $22,000, it’s in a different league compared with the $500,000 total bill of its predecessors. It is as if those established robots, with their batch-mode programming, are the mainframe computers of the robot world, and Baxter is the first PC robot. It is likely to be dismissed as a hobbyist toy, missing key features like sub-millimeter precision, and not serious enough. But as with the PC, and unlike the mainframe, the user can interact with it directly, immediately, without waiting for experts to mediate—and use it for nonserious, even frivolous things. It’s cheap enough that small-time manufacturers can afford one to package up their wares or custom paint their product or run their 3-D printing machine. Or you could staff up a factory that makes iPhones.

Baxter was invented in a century-old brick building near the Charles River in Boston. In 1895 the building was a manufacturing marvel in the very center of the new manufacturing world. It even generated its own electricity. For a hundred years the factories inside its walls changed the world around us. Now the capabilities of Baxter and the approaching cascade of superior robot workers spur Brooks to speculate on how these robots will shift manufacturing in a disruption greater than the last revolution. Looking out his office window at the former industrial neighborhood, he says, “Right now we think of manufacturing as happening in China. But as manufacturing costs sink because of robots, the costs of transportation become a far greater factor than the cost of production. Nearby will be cheap. So we’ll get this network of locally franchised factories, where most things will be made within 5 miles of where they are needed.”

That may be true of making stuff, but a lot of jobs left in the world for humans are service jobs. I ask Brooks to walk with me through a local McDonald’s and point out the jobs that his kind of robots can replace. He demurs and suggests it might be 30 years before robots will cook for us. “In a fast food place you’re not doing the same task very long. You’re always changing things on the fly, so you need special solutions. We are not trying to sell a specific solution. We are building a general-purpose machine that other workers can set up themselves and work alongside.” And once we can cowork with robots right next to us, it’s inevitable that our tasks will bleed together, and soon our old work will become theirs—and our new work will become something we can hardly imagine.

To understand how robot replacement will happen, it’s useful to break down our relationship with robots into four categories, as summed up in this chart:

The rows indicate whether robots will take over existing jobs or make new ones, and the columns indicate whether these jobs seem (at first) like jobs for humans or for machines.

Let’s begin with quadrant A: jobs humans can do but robots can do even better. Humans can weave cotton cloth with great effort, but automated looms make perfect cloth, by the mile, for a few cents. The only reason to buy handmade cloth today is because you want the imperfections humans introduce. We no longer value irregularities while traveling 70 miles per hour, though—so the fewer humans who touch our car as it is being made, the better.

And yet for more complicated chores, we still tend to believe computers and robots can’t be trusted. That’s why we’ve been slow to acknowledge how they’ve mastered some conceptual routines, in some cases even surpassing their mastery of physical routines. A computerized brain known as the autopilot can fly a 787 jet unaided, but irrationally we place human pilots in the cockpit to babysit the autopilot “just in case.” In the 1990s, computerized mortgage appraisals replaced human appraisers wholesale. Much tax preparation has gone to computers, as well as routine x-ray analysis and pretrial evidence-gathering—all once done by highly paid smart people. We’ve accepted utter reliability in robot manufacturing; soon we’ll accept it in robotic intelligence and service.

Next is quadrant B: jobs that humans can’t do but robots can. A trivial example: Humans have trouble making a single brass screw unassisted, but automation can produce a thousand exact ones per hour. Without automation, we could not make a single computer chip—a job that requires degrees of precision, control, and unwavering attention that our animal bodies don’t possess. Likewise no human, indeed no group of humans, no matter their education, can quickly search through all the web pages in the world to uncover the one page revealing the price of eggs in Katmandu yesterday. Every time you click on the search button you are employing a robot to do something we as a species are unable to do alone.

While the displacement of formerly human jobs gets all the headlines, the greatest benefits bestowed by robots and automation come from their occupation of jobs we are unable to do. We don’t have the attention span to inspect every square millimeter of every CAT scan looking for cancer cells. We don’t have the millisecond reflexes needed to inflate molten glass into the shape of a bottle. We don’t have an infallible memory to keep track of every pitch in Major League Baseball and calculate the probability of the next pitch in real time.

We aren’t giving “good jobs” to robots. Most of the time we are giving them jobs we could never do. Without them, these jobs would remain undone.

Now let’s consider quadrant C, the new jobs created by automation—including the jobs that we did not know we wanted done. This is the greatest genius of the robot takeover: With the assistance of robots and computerized intelligence, we already can do things we never imagined doing 150 years ago. We can remove a tumor in our gut through our navel, make a talking-picture video of our wedding, drive a cart on Mars, print a pattern on fabric that a friend mailed to us through the air. We are doing, and are sometimes paid for doing, a million new activities that would have dazzled and shocked the farmers of 1850. These new accomplishments are not merely chores that were difficult before. Rather they are dreams that are created chiefly by the capabilities of the machines that can do them. They are jobs the machines make up.

Before we invented automobiles, air-conditioning, flatscreen video displays, and animated cartoons, no one living in ancient Rome wished they could watch cartoons while riding to Athens in climate-controlled comfort. Two hundred years ago not a single citizen of Shanghai would have told you that they would buy a tiny slab that allowed them to talk to faraway friends before they would buy indoor plumbing. Crafty AIs embedded in first-person-shooter games have given millions of teenage boys the urge, the need, to become professional game designers—a dream that no boy in Victorian times ever had. In a very real way our inventions assign us our jobs. Each successful bit of automation generates new occupations—occupations we would not have fantasized about without the prompting of the automation.

To reiterate, the bulk of new tasks created by automation are tasks only other automation can handle. Now that we have search engines like Google, we set the servant upon a thousand new errands. Google, can you tell me where my phone is? Google, can you match the people suffering depression with the doctors selling pills? Google, can you predict when the next viral epidemic will erupt? Technology is indiscriminate this way, piling up possibilities and options for both humans and machines.

It is a safe bet that the highest-earning professions in the year 2050 will depend on automations and machines that have not been invented yet. That is, we can’t see these jobs from here, because we can’t yet see the machines and technologies that will make them possible. Robots create jobs that we did not even know we wanted done.

Finally, that leaves us with quadrant D, the jobs that only humans can do—at first. The one thing humans can do that robots can’t (at least for a long while) is to decide what it is that humans want to do. This is not a trivial trick; our desires are inspired by our previous inventions, making this a circular question.

When robots and automation do our most basic work, making it relatively easy for us to be fed, clothed, and sheltered, then we are free to ask, “What are humans for?” Industrialization did more than just extend the average human lifespan. It led a greater percentage of the population to decide that humans were meant to be ballerinas, full-time musicians, mathematicians, athletes, fashion designers, yoga masters, fan-fiction authors, and folks with one-of-a-kind titles on their business cards. With the help of our machines, we could take up these roles; but of course, over time, the machines will do these as well. We’ll then be empowered to dream up yet more answers to the question “What should we do?” It will be many generations before a robot can answer that.

This postindustrial economy will keep expanding, even though most of the work is done by bots, because part of your task tomorrow will be to find, make, and complete new things to do, new things that will later become repetitive jobs for the robots. In the coming years robot-driven cars and trucks will become ubiquitous; this automation will spawn the new human occupation of trip optimizer, a person who tweaks the traffic system for optimal energy and time usage. Routine robo-surgery will necessitate the new skills of keeping machines sterile. When automatic self-tracking of all your activities becomes the normal thing to do, a new breed of professional analysts will arise to help you make sense of the data. And of course we will need a whole army of robot nannies, dedicated to keeping your personal bots up and running. Each of these new vocations will in turn be taken over by robots later.

The real revolution erupts when everyone has personal workbots, the descendants of Baxter, at their beck and call. Imagine you run a small organic farm. Your fleet of worker bots does all the weeding, pest control, and harvesting of produce, as directed by an overseer bot, embodied by a mesh of probes in the soil. One day your task might be to research which variety of heirloom tomato to plant; the next day it might be to update your custom labels. The bots perform everything else that can be measured.

Right now it seems unthinkable: We can’t imagine a bot that can assemble a stack of ingredients into a gift or manufacture spare parts for our lawn mower or fabricate materials for our new kitchen. We can’t imagine our nephews and nieces running a dozen workbots in their garage, churning out inverters for their friend’s electric-vehicle startup. We can’t imagine our children becoming appliance designers, making custom batches of liquid-nitrogen dessert machines to sell to the millionaires in China. But that’s what personal robot automation will enable.

Everyone will have access to a personal robot, but simply owning one will not guarantee success. Rather, success will go to those who innovate in the organization, optimization, and customization of the process of getting work done with bots and machines. Geographical clusters of production will matter, not for any differential in labor costs but because of the differential in human expertise. It’s human-robot symbiosis. Our human assignment will be to keep making jobs for robots—and that is a task that will never be finished. So we will always have at least that one “job.”

In the coming years our relationships with robots will become ever more complex. But already a recurring pattern is emerging. No matter what your current job or your salary, you will progress through these Seven Stages of Robot Replacement, again and again:

1. A robot/computer cannot possibly do the tasks I do. [Later.]

2. OK, it can do a lot of them, but it can’t do everything I do. [Later.]

3. OK, it can do everything I do, except it needs me when it breaks down, which is often. [Later.]

4. OK, it operates flawlessly on routine stuff, but I need to train it for new tasks. [Later.]

5. OK, it can have my old boring job, because it’s obvious that was not a job that humans were meant to do. [Later.]

6. Wow, now that robots are doing my old job, my new job is much more fun and pays more! [Later.]

7. I am so glad a robot/computer cannot possibly do what I do now.

This is not a race against the machines. If we race against them, we lose. This is a race with the machines. You’ll be paid in the future based on how well you work with robots. Ninety percent of your coworkers will be unseen machines. Most of what you do will not be possible without them. And there will be a blurry line between what you do and what they do. You might no longer think of it as a job, at least at first, because anything that seems like drudgery will be done by robots.

We need to let robots take over. They will do jobs we have been doing, and do them much better than we can. They will do jobs we can’t do at all. They will do jobs we never imagined even needed to be done. And they will help us discover new jobs for ourselves, new tasks that expand who we are. They will let us focus on becoming more human than we were.

Let the robots take the jobs, and let them help us dream up new work that matters.

Friday, 7 March 2014

The latest robot brain

A researcher at Missouri University of Science and Technology has developed a new feedback system to remotely control mobile robots. This innovative research will allow robots to operate with minimal supervision and could eventually lead to a robot that can learn or even become autonomous.

The research, developed by Dr. Jagannathan Sarangapani, builds on existing robots that move in formation and introduces a fault-tolerant control design to improve the probability of completing a set task. There is considerable room for growth in this field, since cost has kept this kind of redundancy out of most robotic systems.

The new feedback system, funded in part by the National Science Foundation, will allow a "follower" robot to take over as the "leader" robot if the original leader suffers a system or mechanical failure. In a leader/follower formation, the lead robot, a nonholonomic vehicle (one that, like a wheeled robot, cannot move sideways), follows a trajectory set in advance, and the followers use sonar to trace the same path the leader takes.

When a fault forces the robots to swap roles, the fault-tolerant control system comes into play. It uses reinforcement learning and active critique, techniques inspired by behaviorist psychology that model how machines should act in an environment to maximize their performance, to help the newly promoted lead robot estimate its new course. Without this, the follower would have no path to follow and the task would fail.
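The role swap can be illustrated with a minimal Python sketch. This is not Sarangapani's design, which relies on reinforcement learning to estimate the new course; it is a toy model under stated assumptions: each robot exposes a hypothetical `healthy` flag, the leader follows a preset list of waypoints, and the first healthy robot in formation order becomes the new leader and resumes the remaining waypoints. The `Robot` and `Formation` names are invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Waypoint = Tuple[float, float]


@dataclass
class Robot:
    name: str
    healthy: bool = True  # hypothetical health flag reported by each robot


@dataclass
class Formation:
    """Toy leader/follower formation; robots[0] is always the current leader."""
    robots: List[Robot]
    waypoints: List[Waypoint]  # trajectory set in advance for the leader
    next_index: int = 0        # index of the next waypoint to visit

    def leader(self) -> Robot:
        return self.robots[0]

    def handle_faults(self) -> None:
        """Drop failed robots so the first healthy follower becomes the leader."""
        self.robots = [r for r in self.robots if r.healthy]
        if not self.robots:
            raise RuntimeError("no healthy robots left; the task fails")

    def step(self) -> Optional[Tuple[str, Waypoint]]:
        """Move the current leader to its next waypoint (followers would track it)."""
        self.handle_faults()
        if self.next_index >= len(self.waypoints):
            return None  # trajectory complete
        target = self.waypoints[self.next_index]
        self.next_index += 1
        return self.leader().name, target


team = Formation([Robot("A"), Robot("B"), Robot("C")],
                 [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)])
print(team.step())               # ('A', (0.0, 0.0))
team.robots[0].healthy = False   # the leader suffers a mechanical failure
print(team.step())               # ('B', (1.0, 0.0)): B takes over mid-route
```

Keeping the preset waypoints with the formation rather than with a single robot is what lets a promoted follower pick up where the failed leader stopped; in the system described above, the new course is instead estimated by the learning-based controller.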

"Imagine you have one operator in an office controlling 10 bulldozers remotely," says Sarangapani, the William A. Rutledge -- Emerson Electric Co. Distinguished Professor in Electrical Engineering at S&T. "In the event that the lead one suffers a mechanical problem, this hardware allows the work to continue."

The innovative research can be applied to robotic security surveillance, mining and even aerial maneuvering. With the growing number of sinkholes appearing across the country, surveying them in a safe and efficient way is now possible with a remotely controlled machine that can also survive the terrain.

Sarangapani believes that the research matters most for aerial vehicles. When a helicopter is in flight, faults can now be detected and accommodated. This means that instead of a catastrophic failure resulting in a potentially fatal crash, the aircraft has a better chance of making an emergency landing. The fault-tolerant controller would detect the problem and essentially shut down the malfunctioning part while retaining some control of the overall vehicle.

"The end goal is to push robotics to the next level," says Sarangapani. "I want robots to think for themselves, to learn, adapt and use active critique to work unsupervised. A self-aware robot will eventually be here, it is just a matter of time."

Monday, 10 February 2014

Google's drive into robotics should concern us all

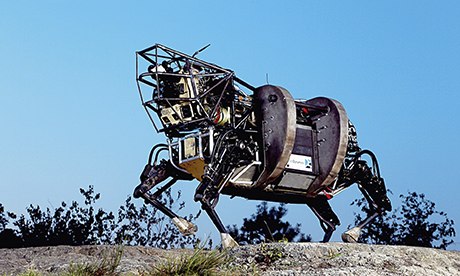

It runs at 4mph, it can toss breeze blocks, it's Boston Dynamics's Big Dog. Photograph: Boston Dynamics

You may not have noticed it, but over the past year Google has bought eight robotics companies. Its most recent acquisition is an outfit called Boston Dynamics, which makes the nearest thing to a mechanical mule that you are ever likely to see. It's called Big Dog and it walks, runs, climbs and carries heavy loads. It's the size of a large dog or small mule – about 3ft long, 2ft 6in tall, weighs 240lbs, has four legs that are articulated like an animal's, runs at 4mph, climbs slopes up to 35 degrees, walks across rubble, climbs muddy hiking trails, walks in snow and water, carries a 340lb load, can toss breeze blocks and can recover its balance when walking on ice after absorbing a hefty sideways kick.

You don't believe me? Well, just head over to YouTube and search for "Boston Dynamics". There, you will find not only a fascinating video of Big Dog in action, but also confirmation that its maker has a menagerie of mechanical beasts, some of them humanoid in form, others resembling predatory animals. And you will not be surprised to learn that most have been developed on military contracts, including some issued by Darpa, the Defense Advanced Research Projects Agency, the outfit that originally funded the development of the internet.

Should we be concerned about this? Yes, but not in the way you might first think. The notion that Google is assembling a droid army that will one day give it a Star Wars capability seems implausible (even if we make allowances for the fact that its mobile software is called Android). No; what makes the robotics acquisitions interesting is what they reveal about the scale of Google's ambitions. For this is a company whose like we have not seen before.

Google is run by two youngish men, Larry Page and Sergey Brin, who are, in a literal sense, visionaries.
Google's shareholding structure gives them untrammelled power: while other companies have to fret about the opinion of Wall Street, quarterly results, etc., the Google boys can do as they please. Where most public companies – and all governments – nowadays have little desire for risk, they appear to have an insatiable appetite for it. One reason for this is that Google's dominance of search and online advertising provides a continuous flow of unimaginable revenues. The company exercises a gravitational pull on the most talented intellects in their field – software. Since software is pure thought-stuff, collective IQ is all that matters in this business. And Google has it in spades.

What drives the Google founders is an acute understanding of the possibilities that long-term developments in information technology have deposited in mankind's lap. Computing power has been doubling every 18 months since 1956. Bandwidth has been tripling and electronic storage capacity has been quadrupling every year. Put those trends together and the only reasonable inference is that our assumptions about what networked machines can and cannot do need urgently to be updated.

Most of us, however, have failed to do that and have, instead, clung wistfully to old certainties about the unique capabilities (and therefore superiority) of humans. Thus we assumed that the task of safely driving a car in crowded urban conditions would be, for the foreseeable future, a task that only we could do. Similarly, we imagined that real-time translation between two languages would remain the exclusive preserve of humans. And so on.

What makes the Google boys so distinctive is not the fact that they did update their assumptions about what machines can and cannot do (because many people in the field were aware of what was becoming possible) but that they possessed the limitless resources needed to explore and harness those new possibilities.
Hence the self-driving car, MOOCs, the Google Books project, the free gigabit connectivity project, the X labs and so on… And these are just for starters. A few months ago, an astute technology commentator, Jason Calacanis, set out what he saw as Google's to-do list. Here's what he came up with: free gigabit internet access for everyone for life; mastering Big Data, machine learning and quantum computing; dominating wearable – and implantable – computing; becoming a huge venture capitalist and developing new kinds of currency (a la Bitcoin); becoming the world's biggest media company; revolutionising healthcare and technologies for life extension; alternative energy technologies; and transforming transportation.

If any other company had a to-do list such as this we would have its executives sectioned under the Mental Health Act. And it's possible, I suppose, that the Google founders are indeed nuts. But I wouldn't bet on it, which is why we ought to be concerned. Because if even a fraction of the company's ambitions eventually come to fruition, Google will become one of the most powerful corporations on Earth. And we know what Lord Acton would have said about that.
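The column's "put those trends together" is a compounding argument, and a few lines of Python make it concrete. This is a sketch that takes the three rates quoted above at face value (doubling every 18 months, tripling every year, quadrupling every year); the ten-year horizon and the `growth_factor` helper are assumptions chosen purely for illustration.

```python
def growth_factor(multiplier: float, period_years: float, horizon_years: float) -> float:
    """Total growth after horizon_years if capacity multiplies by `multiplier` every `period_years`."""
    return multiplier ** (horizon_years / period_years)


HORIZON = 10  # years; an arbitrary horizon chosen for illustration

# Rates as quoted in the column: (multiplier, period in years)
trends = {
    "computing power (x2 every 1.5 years)": (2, 1.5),
    "bandwidth (x3 every year)": (3, 1),
    "storage (x4 every year)": (4, 1),
}

for label, (mult, period) in trends.items():
    print(f"{label}: about {growth_factor(mult, period, HORIZON):,.0f}x in {HORIZON} years")
```

On those assumptions, ten years of compounding gives roughly a 100-fold gain in computing power, about 59,000-fold in bandwidth and about a million-fold in storage, which is why even modest-sounding annual rates overturn old assumptions so quickly.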

Saturday, 30 November 2013

Copycat Russian android prepares to do the spacewalk

This robot is looking pretty pleased with itself – and wouldn't you be, if you were off to the International Space Station? Prototype cosmobot SAR-401, with its human-like torso, is designed to service the outside of the ISS by mimicking the arm and finger movements of a human puppet-master indoors.

In this picture, that's the super-focussed guy in the background, but in space it would be a cosmonaut operating from the relative safety of the station's interior, so avoiding a risky spacewalk. You can watch the Russian android mirroring a human here.

SAR-401 joins a growing zoo of robots in space. NASA already has its own Robonaut on board the ISS to carry out routine maintenance tasks. It was recently joined by a small, cute Japanese robot, Kirobo, but neither of the station's droids is designed for outside use.

Until SAR-401 launches, the station's external Dextre and Canadarm2 rule the orbital roost. They were commemorated on Canadian banknotes this year – and they don't even have faces.

Friday, 29 November 2013

How To Switch From iPhone To Android

Which is better: iPhone or Android? It is a never-ending debate. Each side has its advantages and disadvantages, and you simply can’t say one is better than the other. But if you have decided to switch sides, you might get a little help from Google's former CEO, Eric Schmidt. Today he posted a complete how-to guide on switching from iPhone to Android.

iPhone To Android

Switching to a completely different operating system can be a hassle, especially once you have grown used to one. There are plenty of guides to help you switch from iPhone to Android or vice versa, but when one comes directly from Google's former CEO, it certainly raises some questions.

Eric Schmidt helped propel Google to what it is today: one of the most innovative tech companies in the world. Now he is helping Google in another way.

In a Google+ post, Eric Schmidt wrote an extensive guide on how to take the leap of faith. If you are deciding whether to switch from iPhone to Android, you can read the entire guide there.

Here is an excerpt from it:

1. Set up the Android phone

a) Power on, connect to WiFi, login with your personal Gmail account, and download in the Google Play Store all the applications you normally use (for example, Instagram).

b) Make sure the software on the Android phone is updated to the latest version (i.e. 4.3 or 4.4). You should get a notification if there are software updates.

c) If you are using AT&T, download the Visual Voicemail app from the Play Store.

d) You can add additional Gmail accounts now or later.

If you want to switch from iPhone to Android, the entire post is worth reading. Will you be making the switch?
